The Antisocial Distancing Ensemble

Sound Circuits 2021, Lisbon (PT)

The Antisocial Distancing Ensemble set out to confront current attitudes towards the COVID-19 pandemic and our positional sensibility towards the other. The public intervention is designed to challenge the conventional dualism between physical distancing and social connectedness, mediating collective interaction through mobile sound feedback.

Moving Digits

Artistic Residency and Performance, STL, Tallinn (EE)

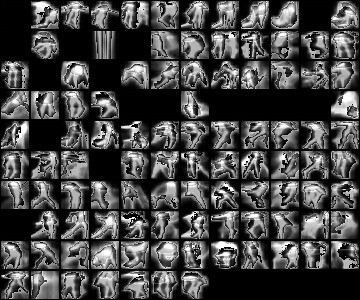

Moving Digits: Augmented Dance for Engaged Audience aims to enhance audience understanding of and engagement with contemporary dance performances, and to let audiences experience dance in an augmented way (even after the performance). The project also aims to empower dancers, choreographers and technicians with further tools for expression, archiving and analysis.

The Liquid Interface for Musical Expression (LIME)

Edited Arts Demonstration and Audio Description Project Blog

UNLOOP - Mini Documentary

UNLOOP, by David Negrão & Sara Montalvão, is an interdisciplinary and participatory project created in the context of two artistic residencies in Alentejo (São Luís, Odemira and Montemor-o-Novo) in October and November 2021.

ADDA

A musical performance using embodied technologies. We designed a set of sensory smart gloves that track the spatial movements of the performer's hands along with the relative position of the fingers, and these readings are used to detect different types of motion and gestures. Used in conjunction, the gloves allow the performer to control the sound output. Simultaneously, we control a modified TENS muscle stimulator, overriding the performer's control of movement when a current is imposed.

Collaboration with Vytautas Niedvaras

AD/DA: Electronic Sound Installation & Performances

Dance2Vec Thesis

Full PDF |

Interactive Visualizer

False Efficiency

Collaboration with Rosie Gibbens

"Using sensors and microphones on my body and the table, an unsettling soundscape is created as I perform the gestures.

It builds in intensity at moments of increased movement. The stamp cues whispered phrases from Ivanka’s book.

These were chosen by me for their corporate jargon, aspirational nature or gendered associations"

Shown as part of the ‘Youth Board Presents’ at Toynbee Studios. April 2018. Photos by Greg Goodale.

Contact / Pages

weselle [at] protonmail.com LinkedIn Google Scholar GitHub Resident Advisor Strava

Bio

-

William Primett is a researcher and designer based in Tallinn, Estonia, collaborating with the dance-technology research group MODINA and specialising in Movement Computing and Computational Creativity. William was previously affiliated with the AffecTech consortium, supported by the Marie Sklodowska-Curie Innovative Training Network, pursuing the design of wearable technologies for emotional understanding in consideration of mental health disorders. The outcomes of this work contributed to their PhD thesis “Non-Verbal Communication with Physiological Sensors: The Aesthetic Domain of Wearables and Neural Networks” (2023), which advocates for non-representational biofeedback appropriate for interpersonal dialogue. William’s current research aims to develop textile-based paradigms for self-expression, intended to project biologically meaningful information beyond the bounds of clinical environments.

Skills

-

C++, Python, Node.js, TensorFlow, Keras,

openFrameworks, Processing, ChucK, Autodesk,

Unity, Max/MSP, Ableton Live, Adobe Suite